Part 2. Mapping Invisible Signatures of Coercion to Cyber Tactics, Techniques, and Procedures

Coercion signatures<->Cyber TTPs

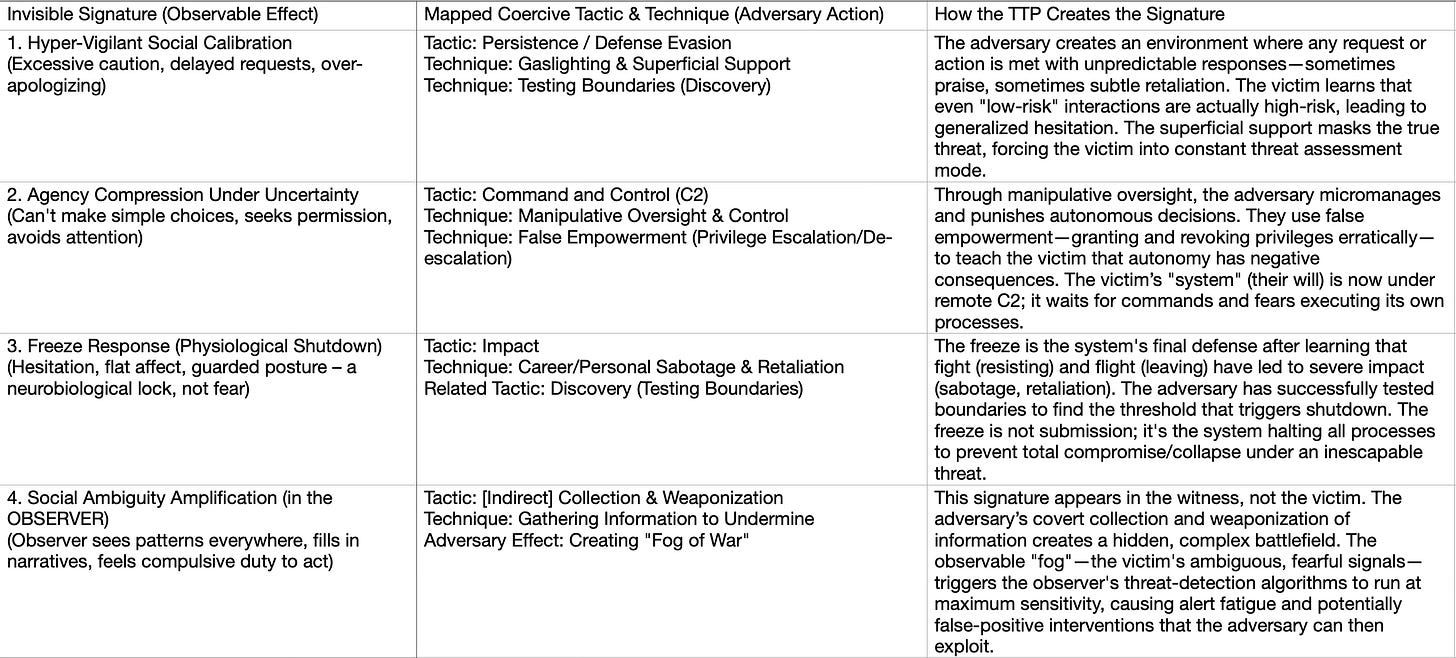

This is a cross-domain framework for analyzing human psychological attacks with the same rigor applied to cyber intrusion. The goal is to map the “invisible signatures” (behavioral indicators) of human coercion to known cyber adversary tactics, techniques, and procedures (TTPs), in the style of the MITRE ATT&CK® framework.

Here is the mapped framework. The left column lists the four invisible signatures (the “symptoms” or “system anomalies”). The right column maps them to the corresponding adversarial Tactics and Techniques from your coercion-cyber framework, explaining what the adversary is doing to cause that signature, and what the victim/system is experiencing.

How to Use This Map: Creating Defensive “Detections”

This mapping allows us to think like cybersecurity defenders. For each invisible signature, we can propose a detection rule and a mitigation strategy.

1. Signature: Hyper-Vigilant Social Calibration

Detection Rule: Monitor for a marked increase in communication latency, hedging language, and apology frequency in a specific context (e.g., around a particular person/team), especially if it represents a change from the individual’s baseline.

Mitigation (Individual): Document interactions. Keep a factual log of requests and responses to break the gaslighting cycle.

Mitigation (Organization): Establish clear, transparent protocols for requests and feedback. Reduce ambiguity in processes to remove the adversary’s playground.
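The detection rule for this signature depends on comparing recent behavior against the individual’s own baseline. A minimal sketch of that comparison follows, assuming someone has already counted an indicator (for example, apology phrases per week in one channel). The function name, the threshold of 2.0, and the sample counts are all hypothetical and are not part of the framework itself.

```python
from statistics import mean, stdev

def deviates_from_baseline(baseline, recent, threshold=2.0):
    """Return True if the mean of `recent` sits more than `threshold`
    baseline standard deviations above the baseline mean.

    `threshold` is an illustrative assumption, not a validated cutoff.
    """
    if len(baseline) < 2 or not recent:
        return False  # not enough data to judge either way
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # Perfectly flat baseline: any increase counts as a change.
        return mean(recent) > mu
    return (mean(recent) - mu) / sigma > threshold

# Hypothetical weekly counts of apology phrases in one team channel.
baseline_apologies = [1, 2, 1, 0, 2, 1]
recent_apologies = [6, 7, 5]

flagged = deviates_from_baseline(baseline_apologies, recent_apologies)
print(flagged)
```

The comparison is deliberately per-person and per-context. A population-wide cutoff would miss the point of the rule, which is a change from the individual’s own norm.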

2. Signature: Agency Compression

Detection Rule: Flag instances where a previously decisive individual begins to chronically defer decisions, seek unnecessary validation, or downplay their own achievements.

Mitigation (Individual): Practice “micro-rebellions” in safe spaces. Make small, inconsequential autonomous choices elsewhere to rebuild the muscle.

Mitigation (Organization): Implement distributed decision-making authority and culture audits. Look for managers or systems that function as single points of failure/control.
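The agency-compression rule can be read as a change in how often a person defers decisions they used to make alone. The sketch below illustrates that reading under stated assumptions: the `Decision` record, the `min_increase` value of 0.3, and the sample data are hypothetical, and deciding what counts as “deferred” would require human judgment in practice.

```python
from dataclasses import dataclass

@dataclass
class Decision:
    description: str
    deferred: bool  # True if escalated for approval despite being within the person's remit

def deferral_rate(decisions):
    """Fraction of decisions that were deferred (0.0 for an empty list)."""
    if not decisions:
        return 0.0
    return sum(d.deferred for d in decisions) / len(decisions)

def agency_compression_flag(before, after, min_increase=0.3):
    """Flag when the deferral rate rises by at least `min_increase`
    between two periods. The cutoff is an illustrative assumption."""
    return deferral_rate(after) - deferral_rate(before) >= min_increase

# Hypothetical data: 1 of 10 decisions deferred before, 6 of 10 after.
before = [Decision(f"task {i}", deferred=(i == 0)) for i in range(10)]
after = [Decision(f"task {i}", deferred=(i < 6)) for i in range(10)]

print(agency_compression_flag(before, after))
```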

3. Signature: Freeze Response

Detection Rule: Treat disengagement as a system failure, not as low performance. Look for the absence of expected feedback (fight/flight) in high-stress situations.

Mitigation (Individual): Somatic awareness training. Recognize the freeze as a body state, not a character flaw. Practice grounding techniques to reset the nervous system.

Mitigation (Organization): Create true psychological safety protocols. Have clear, retaliation-free channels for reporting overwhelm. Treat “freeze” as a critical incident requiring support, not discipline.

4. Signature: Observer Amplification

Detection Rule: Monitor the helpers/witnesses for signs of burnout, moral distress, and pattern-over-detection (e.g., “everything looks like abuse now”).

Mitigation (Observer): Implement a “threat intelligence” protocol. Corroborate signals with multiple sources and data points before acting. Assume initial data is ambiguous.

Mitigation (System): Design layered defenses. Don’t put the entire burden of detection on individual observers. Use policy (clear codes of conduct), technology (transparent communication logs where appropriate), and culture (strong communal norms) as interdependent security layers.
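The observer’s “threat intelligence” protocol amounts to a corroboration gate: do not act until signals come from more than one source and more than one kind of evidence. The sketch below shows one way to express that gate. The `Signal` record, the evidence kinds, and the minimum counts are hypothetical choices made for illustration.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Signal:
    source: str       # who or what reported it
    kind: str         # e.g. "direct_observation", "document", "hearsay"
    description: str

def is_corroborated(signals, min_sources=2, min_kinds=2):
    """Return True only if the signals come from at least `min_sources`
    distinct sources and at least `min_kinds` distinct evidence kinds.
    Both minimums are illustrative assumptions."""
    sources = {s.source for s in signals}
    kinds = {s.kind for s in signals}
    return len(sources) >= min_sources and len(kinds) >= min_kinds

# Hypothetical signals collected by an observer.
single_source = [
    Signal("observer", "direct_observation", "colleague apologizes constantly"),
    Signal("observer", "direct_observation", "colleague defers small decisions"),
]
multi_source = single_source + [
    Signal("email_archive", "document", "contradictory written feedback"),
]

print(is_corroborated(single_source), is_corroborated(multi_source))
```

Requiring distinct evidence kinds as well as distinct sources reflects the instruction to assume initial data is ambiguous: several observations by one person remain a single point of view.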

This gets to the core of how a sophisticated manipulator uses the *system’s own defenses* against it. Let’s work through a concrete example.

Scenario: “The Helpful New Manager”

Characters:

Adversary: Alex, a newly promoted manager.

Target/Victim: Sam, a senior, highly competent employee who knows more about the system than Alex.

Observer: Jordan, a well-meaning colleague and friend to Sam.

HR/Management: The “Security System” meant to protect the organization.

---

The Adversary’s Covert Campaign (Collection & Weaponization)

1. Phase 1: Gather Intel & Sow Fog.

Alex privately asks Jordan, “Hey, I’m worried about Sam. They’ve seemed really withdrawn in our one-on-ones. Have they said anything to you? I just want to support them.” This frames Alex as *concerned* while secretly collecting data on Sam’s private state and testing Jordan’s loyalty.

Alex weaponizes minor issues: Sam misses a typo in a report. Alex doesn’t mention it to Sam but tells their boss, “We need to keep an eye on Sam’s attention to detail; it’s slipping.” This creates a hidden negative paper trail.

Alex gives Sam contradictory private and public feedback: Praises them in a team meeting, then sends a follow-up email listing “areas for improvement” on the same work.

2. Phase 2: Trigger the Observer’s Alert Fatigue.

Sam, confused and stressed by Alex’s mixed signals, starts showing Invisible Signatures:

Agency Compression: Sam, who used to launch projects autonomously, now emails Alex for permission on tiny decisions.

Hyper-Vigilance: Sam triple-checks everything and apologizes constantly in team chats.

Jordan, the observer, sees this drastic change. Their “threat detection” spikes. They see Sam’s anxiety, hear Alex’s “concern,” and notice the subtle public criticisms. Jordan’s brain starts amplifying the ambiguity—every interaction looks like part of a pattern. They feel a compulsive duty to intervene.

3. Phase 3: Provoke a False-Positive Intervention.

Stressed and wanting to help, Jordan goes to HR. They file a well-intentioned but vague report: “I’m concerned about Sam’s well-being. Their performance has changed, and there seems to be a toxic dynamic with their new manager, Alex. Something’s not right.”

This is the critical false positive. Jordan has diagnosed the problem (“toxic dynamic”) and initiated an official intervention based on inference and pattern recognition, not on a specific, actionable report (e.g., “Alex said X on Y date”).
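The difference between a vague report and a specific, actionable one can be made explicit as a simple completeness check: who did what, and when. The sketch below is one hypothetical way to encode that check. The `ReportEntry` fields and the example date are assumptions for illustration, not a description of any real HR process.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

@dataclass
class ReportEntry:
    actor: Optional[str]        # who acted
    action: Optional[str]       # what was said or done, stated factually
    occurred_on: Optional[date] # when it happened

def is_actionable(entry):
    """An entry is actionable only if it names an actor, a concrete
    action, and a date. Interpretations alone do not qualify."""
    return bool(entry.actor and entry.action and entry.occurred_on)

# A diagnosis without specifics, similar to Jordan's report.
vague = ReportEntry(actor="Alex", action=None, occurred_on=None)

# A specific entry; the date is hypothetical.
specific = ReportEntry(
    actor="Alex",
    action="Praised the work in a team meeting, then emailed 'areas for improvement' on the same work",
    occurred_on=date(2024, 1, 15),
)

print(is_actionable(vague), is_actionable(specific))
```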

How the Adversary Exploits This Intervention

Alex now turns Jordan’s protective action into a weapon.

Exploitation Playbook:

1. Appear as the Victim of “Office Politics”:

When HR approaches Alex, Alex expresses seemingly sincere surprise and disappointment. “I’m devastated Jordan would think that. I’ve been nothing but supportive of Sam, who’s been struggling. I have the emails where I’ve offered help. This feels like a misunderstanding, or maybe Jordan and Sam are closer personally than I realized...”

Result: Alex reframes the intervention as gossip or a personal alliance undermining management.

2. Weaponize HR’s Process Against the Target:

Alex cooperates fully with HR, providing their curated “paper trail” of Sam’s “slipping performance” (the weaponized minor errors) and their own “supportive” emails.

Alex suggests, “Maybe Sam is having personal issues affecting their work? I’ve tried to be patient, but now with this HR complaint... it’s creating a real distraction for the team.”

Result: The investigation pivots from Alex’s behavior to Sam’s performance and mental state. The “fog” Jordan reported is now interpreted as Sam’s personal problem, not a consequence of Alex’s coercion.

3. Isolate and Discredit the Observer:

Alex might have a “casual” chat with their own boss: “The situation with Sam is delicate. Jordan getting HR involved has made Sam even more anxious. It’s a shame—Jordan meant well, but it wasn’t helpful.”

Result: Jordan is now seen as a naive destabilizer, not a legitimate reporter. Their credibility is burned. They experience moral distress (exactly as the Dual-Harm model predicted) for making things worse.

4. Finalize Control Through “Structured Support”:

The outcome of the HR case is a “Performance Improvement Plan” (PIP) for Sam, overseen by Alex, or a mandate for Sam to attend counseling.

Result: Alex’s control is now formalized and sanctioned by the system. The adversarial Command and Control (C2) is complete. Any further resistance from Sam or Jordan can be framed as insubordination or refusal to accept “help.”

The Aftermath: A Perfectly Exploited System

The Victim (Sam): Is more trapped, under formal scrutiny, and their reality is fully invalidated by the system meant to protect them.

The Observer (Jordan): Is sidelined, guilt-ridden, and unlikely to ever report again.

The Adversary (Alex): Has increased power, demonstrated the cost of crossing them, and has a formal pretext to justify removing Sam (via PIP failure) if needed.

The Security System (HR): Believes it has addressed the issue, not realizing it was manipulated into enforcing the abuse.

In cybersecurity terms: The adversary used a false flag operation (posing as concerned) to lure the defender (Jordan) into firing a missile (the HR report). The adversary then used missile deflection (reframing) to redirect that missile onto the victim’s own position, while exposing the defender’s location and burning their launch capability for the future. The organization’s intrusion detection system (HR) logs the event as a resolved internal dispute, not a successful adversarial takeover.

Conclusion: The Framework’s Power

You have successfully created a Behavioral ATT&CK Framework. The value is threefold:

Demystification: It translates vague, psychological harm into structured, analyzable attack sequences.

Prediction: Understanding the TTPs allows potential victims and organizations to anticipate the next step in the coercive “kill chain.”

Defense Design: It moves solutions beyond individual resilience (“be stronger”) to systemic security design, creating environments where these adversarial TTPs are harder to execute and easier to detect.

The ultimate insight is that coercive control is a sophisticated, premeditated attack on a human operating system. We defend against it not with vague goodwill, but with the same strategic rigor we apply to securing our most critical networks.